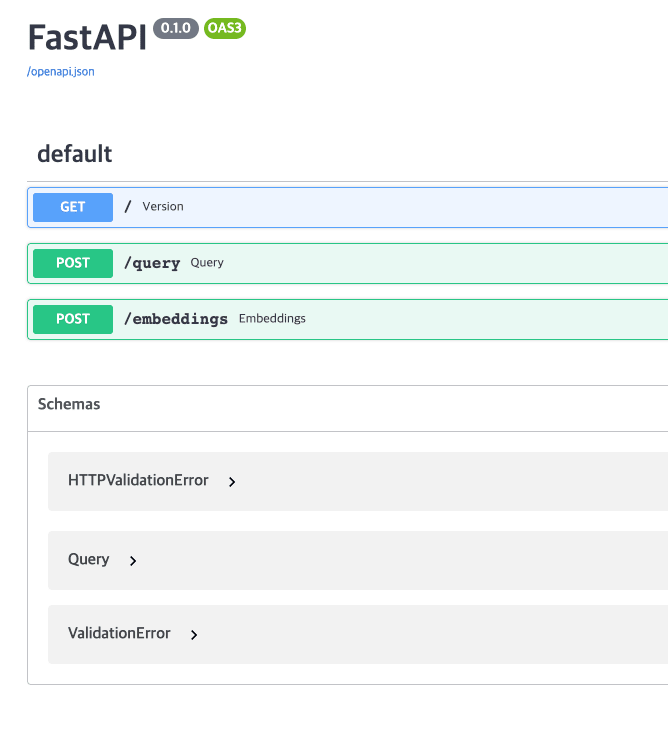

This chapter is a quick test of Llama 2. I am going to deploy a web service using FastAPI and Llama 2.

Run web server

- `git clone https://github.com/choonho/llama_server.git`
- `cd llama_server`
- `pip3 install llama-cpp-python langchain`
- `pip3 install fastapi uvicorn`

Prepare Llama 2 model file

In step 2, we created the Llama 2 model file. Copy it to "models/7B/ggml-model-q4_0.bin".

Run Server

- `python3 server.py`

Te..
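
Before running `python3 server.py`, it can help to confirm that the model file really is where the copy step put it. The following is a minimal sketch, not part of the llama_server repository: it assumes the path "models/7B/ggml-model-q4_0.bin" is relative to the llama_server directory, and `model_file_ready` is a hypothetical helper name chosen for illustration.

```python
from pathlib import Path

# Path taken from the copy step; assumed to be relative to the
# llama_server directory (an assumption, not confirmed by the repository).
MODEL_PATH = Path("models/7B/ggml-model-q4_0.bin")


def model_file_ready(path: Path = MODEL_PATH) -> bool:
    """Return True if the model file exists and is not empty."""
    return path.is_file() and path.stat().st_size > 0


if __name__ == "__main__":
    if model_file_ready():
        print(f"Found model file: {MODEL_PATH}")
    else:
        print(f"Model file missing or empty: {MODEL_PATH}")
```

Run it from inside the llama_server directory. If it reports the file as missing, repeat the copy step before starting the server.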